We are watching the evolution of artificial intelligence (AI) image generators in real time. Not only are such projects multiplying, but many of them are moving beyond the research stage and becoming available to all users. A little over a month ago, the open betas of Midjourney and DALL-E 2 were announced. Now it is the turn of Stable Diffusion.

You may not have heard of Stable Diffusion. It is a diffusion model that generates photorealistic images from text prompts, developed by a startup called Stability AI together with researchers from the University of Heidelberg (Germany). Its images show an impressive level of detail and are closer to those of DALL-E 2 than to those of alternatives such as Midjourney, whose style is more artistic and less realistic.

Stable Diffusion, available to everyone

Like other models of its kind, Stable Diffusion was trained on data from the Internet. In this case, LAION-Aesthetics, a set of millions of images filtered and classified by AI, was used to teach the model the associations between written concepts and images. The company says that while this technique is very effective, it is exposed to “social biases and unsafe content available on the web”, so it asks that the model be used responsibly.

After initially being limited to project collaborators and selected researchers, Stable Diffusion is now accessible to everyone. The stable version is available through DreamStudio, which serves as both a front end and a paid API. The good news is that registration is free, and upon login you receive 200 credits for image generation. Note, however, that 1 credit does not always equal 1 image. Let’s see why.

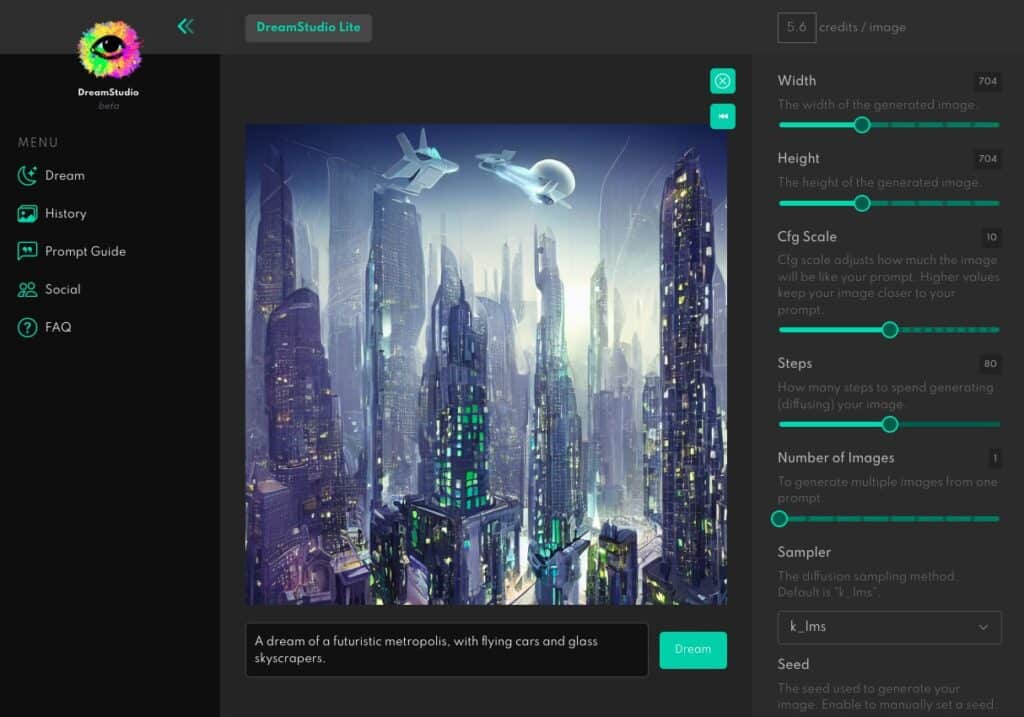

When you enter DreamStudio, you will find a simple, friendly interface. To generate an image, you enter the desired text (in English) in the “A dream of…” box and adjust the image width, height, and other generation parameters. As you move the controls, the number of credits the image will cost increases or decreases.

In our test, for example, we asked for “a futuristic metropolis, with flying cars and glass skyscrapers”, with the settings you can see in the screenshot. DreamStudio “priced” the job at 11 credits. That seemed reasonable to us, so we clicked Dream and it produced the image above. But this is not the only option available.
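To put that price in context, here is a minimal arithmetic sketch of how far the 200 free credits would go at 11 credits per image. It assumes every image costs the same, which will not hold in practice, since the cost changes with the settings. The function name `images_affordable` is our own illustration and is not part of DreamStudio or its API.

```python
def images_affordable(credits: int, cost_per_image: int) -> tuple[int, int]:
    """Return (number of images, leftover credits) at a fixed per-image cost.

    Hypothetical helper for illustration only; it assumes a constant cost.
    """
    if cost_per_image <= 0:
        raise ValueError("cost_per_image must be positive")
    # divmod gives the whole number of images and the credits left over.
    return divmod(credits, cost_per_image)


# 200 free credits, 11 credits per image (the figures from our test).
count, leftover = images_affordable(200, 11)
print(f"{count} images, {leftover} credits left")  # 18 images, 2 credits left
```

With the settings from our test, the free credits would cover 18 such images.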

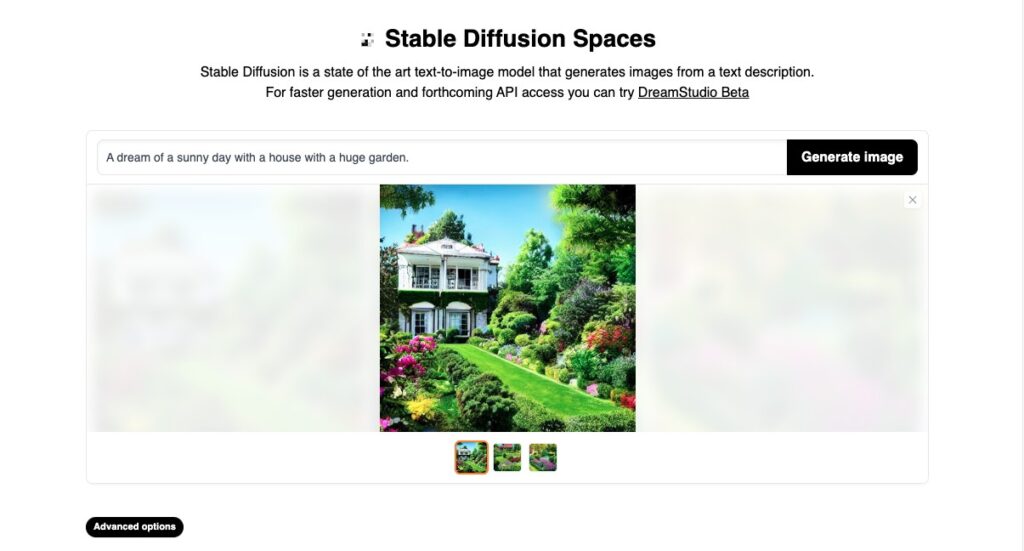

The company has also publicly released a demo that is much easier to use. In this case, you just enter the text and click Generate image. Here we tried “a sunny day with a house with a huge garden”, and the result was quite realistic. As we can see, this is one more tool for unleashing our creativity and evaluating the progress of these systems.

The creators of Stable Diffusion say they will continue working to improve the model, including its ability to filter out unwanted results. A version that can be run locally will be released at a later date. However, it will require a graphics card at least on the level of the Nvidia GeForce GTX 1660.

If you feel there are too many AI tools available and you don’t know where to start, we recommend this interesting Twitter thread by researcher Fabian Stelzer, who has compared the results of DALL-E 2, Midjourney, and Stable Diffusion.
